Preprint on decision-making and large language models

Can large language models help us understand the psychology of risky choice?

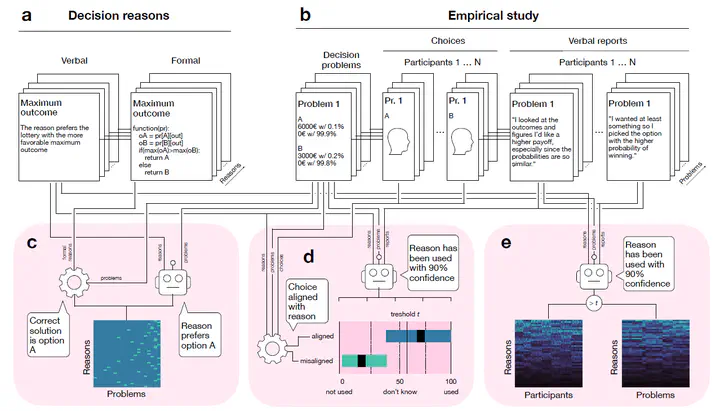

Our colleague Kamil Fulawka is the first author of a new preprint that tackles this question. Together with Ralph Hertwig and Dirk Wulff, he introduces a scalable approach for analyzing participants’ free-text explanations of their decisions.

The study shows that LLMs can accurately identify the reasons people give for their lottery choices in more than 92% of cases. These verbal reports reveal systematic patterns: people’s reasoning depends strongly on the structure of the choice problem rather than being fixed across individuals. Models built on these reason profiles also achieve higher predictive accuracy than prospect theory, one of the most established frameworks in decision science.

By demonstrating the scientific value of verbal data, this work opens new avenues for developing context-aware models of human decision-making.

👉 A more detailed description of the analysis, as well as the code and data, can be found here.